AI Software Intern, Riva - NVIDIA (May 2022 – Aug. 2022)

- Supervisor: Dr. Oluwatobi Olabiyi

- Will research deep learning and natural language processing techniques to build high-performance conversational AI services.

- Assist Professor Jessica Ouyang with summarization of multiple scientific articles.

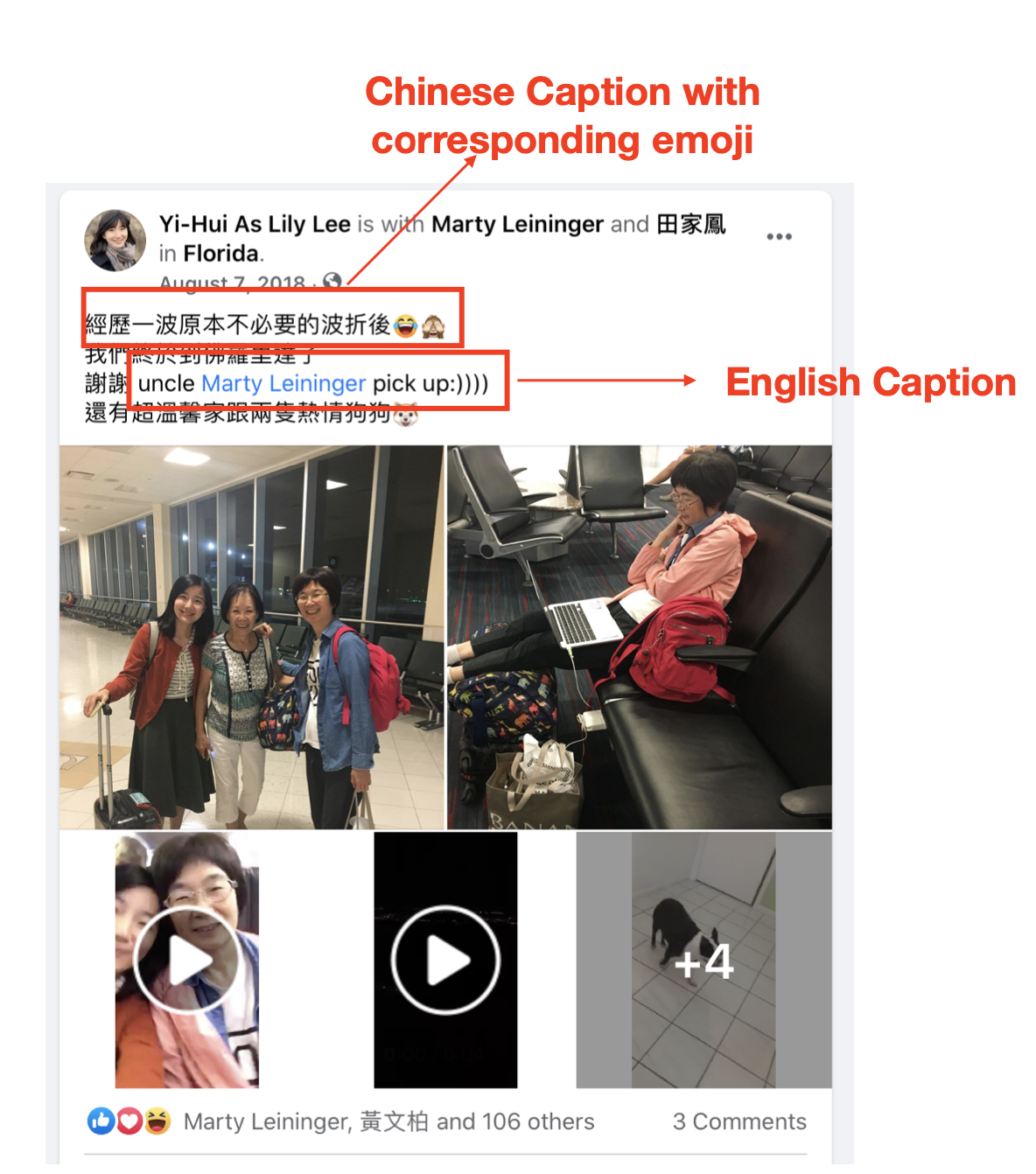

- Assist Professor Shuang Hao with research programs that analyze and defend against fake content and identified disinformation campaigns.

- Applied various deep convolutional neural networks to classify downsized MNIST, fine-tuning to a test accuracy of 80.84% in 4 minutes.

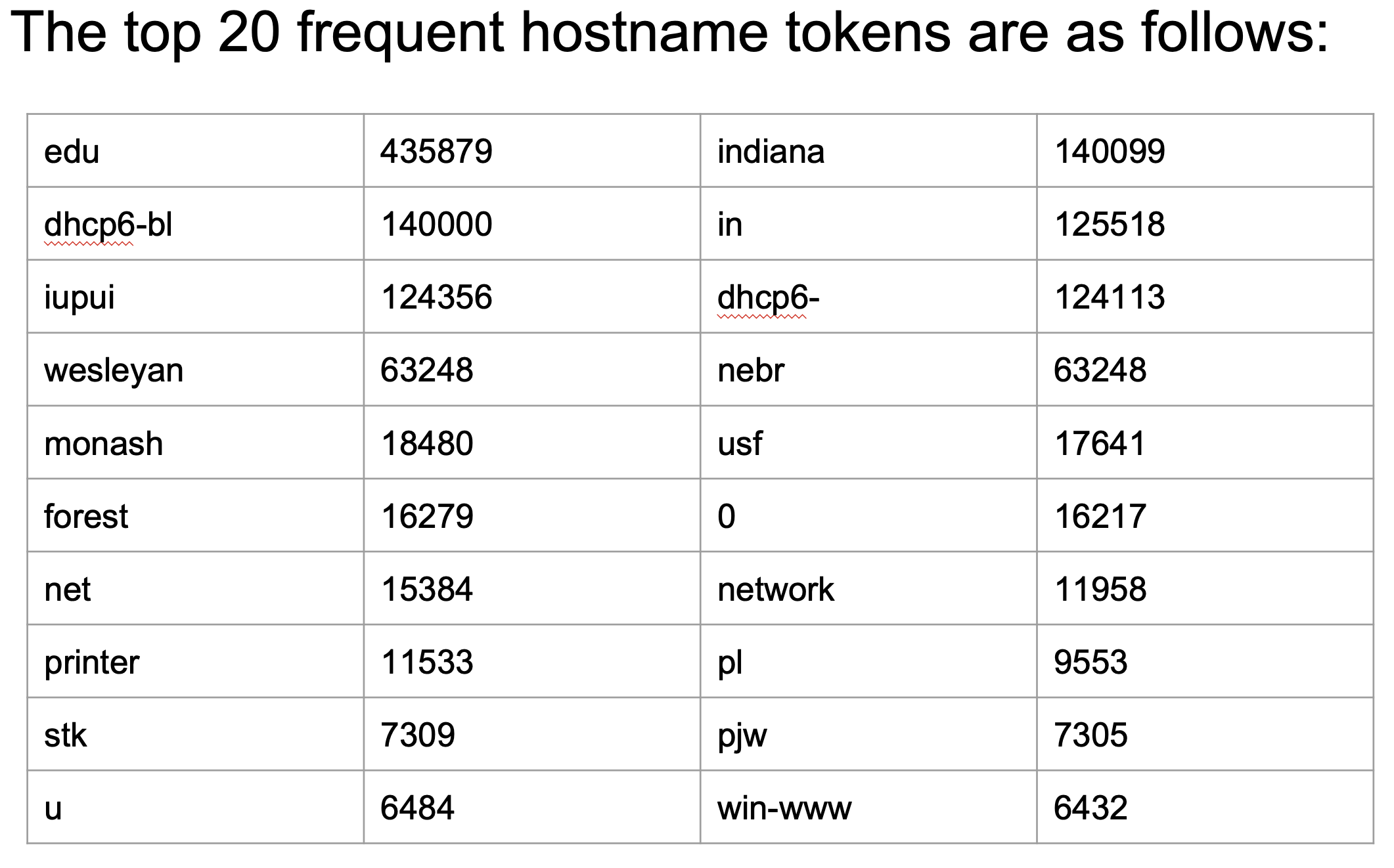

- Used NLP and clustering techniques to derive naming patterns of IoT devices from domain names.

- Mentored a class of 70; moderated and evaluated NER research projects, which achieved a 91.3% average accuracy.

- Courses:

Natural Language Processing (Spring 2020)

Machine Learning (Fall 2020, Spring 2021)

Semantic Web (Fall 2019)

Discrete Mathematics (Summer 2019)

Software Project Planning and Management (Spring 2021)

- Chinese Knowledge and Information Processing (CKIP) LAB

- Main focus: Natural Language Processing; advisor: Professor Wei-Yun Ma.

- Specific areas:

a. Entity Linking: We cooperated with PIXNET to link the cosmetic products mentioned in PIXNET blogs. We formulated a headword-oriented entity linking problem and developed a product embedding model to solve it in the cosmetics domain. Our model raised accuracy from a 64% baseline to 83.4%. We published this work in LREC 2020.

b. Information Extraction: Designed the "Improved Pattern Ranking Algorithm (IPRA)" and built an information extraction system for the movie domain. Our algorithm improved the F1 score from a 67% baseline to 73.4%. We published this work in the WWW 2017 demo session and received the Best Demo - Special Mention Award.

- Led a team of 15 in an industry-university collaboration, reducing manual labeling time and cost by 90% for 1,000k+ products.

- Knowledge Discovery and Data Mining (KDD) LAB

- Main focus: Text Mining and Relation Extraction; advisor: Professor Jia-Ling Koh.

- Extracted conditional relationships between diseases and symptoms using a web search-based approach. We published this work in Web Intelligence, December 2018.

Software Engineering Intern - IBM, Taiwan (Jul. 2015 – Dec. 2015)

- Detected and debugged defects during the User Acceptance Testing stage; troubleshot and fixed issues in the insurance batch system under an Agile development process.

- Liaised among engineers, architects, and product managers; confirmed specifications and technical details while maintaining the software requirements specification for the insurance system.

- Planned a round-table panel talk and contributed to the business proposal for the internal summer intern emotional cloud service competition.